|

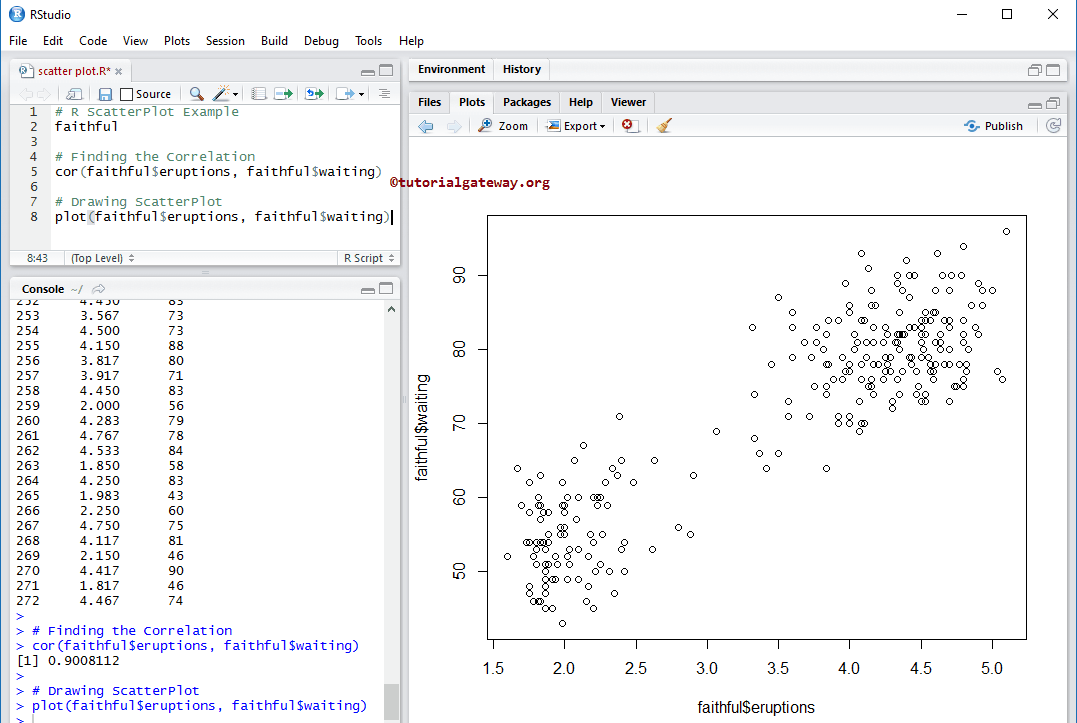

11/6/2022

R help cplot multinom

Further arguments passed to mlogit.data or …

An object of class "mlogit", a list with elements:

- coefficients: the named vector of coefficients,
- hessian: the hessian of the log-likelihood at convergence,
- gradient: the gradient of the log-likelihood at convergence,
- est.stat: some information about the estimation (time used, …),
- expanded.formula: the formula (a formula object),
- index: the index of the choice and of the alternatives.

For how to use the formula argument, see Formula(). The data argument may be an ordinary data.frame. In that case, some supplementary arguments should be provided and are passed to mlogit.data. Note that it is not necessary to indicate the choice argument, as it is deduced from the formula. The model is estimated using mlogit.optim().

The basic multinomial logit model and three important extensions of it can be estimated. If heterosc=TRUE, the heteroscedastic logit model is estimated: J - 1 extra coefficients are estimated that represent the scale parameter for J - 1 alternatives, the scale parameter for the reference alternative being normalized to 1.

This page covers algorithms for Classification and Regression. It also includes sections discussing specific classes of algorithms, such as linear methods, trees, and ensembles.

Logistic regression is a popular method to predict a categorical response. It is a special case of Generalized Linear models that predicts the probability of the outcomes. In spark.ml, logistic regression can be used to predict a binary outcome by using binomial logistic regression, or it can be used to predict a multiclass outcome by using multinomial logistic regression. Use the family parameter to select between these two algorithms, or leave it unset and Spark will infer the correct variant.

Multinomial logistic regression can be used for binary classification by setting the family param to "multinomial". It will produce two sets of coefficients and two intercepts.

When fitting a LogisticRegressionModel without intercept on a dataset with a constant nonzero column, Spark MLlib outputs zero coefficients for constant nonzero columns. This behavior is the same as R glmnet but different from LIBSVM.

Binomial logistic regression

For more background and more details about the implementation of binomial logistic regression, refer to the documentation of logistic regression in spark.mllib.

The following example shows how to train binomial and multinomial logistic regression models for binary classification with elastic net regularization. elasticNetParam corresponds to $\alpha$ and regParam corresponds to $\lambda$.

    import org.apache.spark.ml.classification.LogisticRegression;
    import org.apache.spark.ml.classification.LogisticRegressionModel;
    import org.apache.spark.sql.Dataset;
    import org.apache.spark.sql.Row;
    import org.apache.spark.sql.SparkSession;

    // Load training data (spark is an existing SparkSession)
    Dataset<Row> training = spark.read().format("libsvm")
      .load("data/mllib/sample_libsvm_data.txt");

    LogisticRegression lr = new LogisticRegression()
      .setMaxIter(10)
      .setRegParam(0.3)
      .setElasticNetParam(0.8);

    // Fit the model
    LogisticRegressionModel lrModel = lr.fit(training);

    // Print the coefficients and intercept for logistic regression
    System.out.println("Coefficients: "
      + lrModel.coefficients() + " Intercept: " + lrModel.intercept());

    // We can also use the multinomial family for binary classification
    LogisticRegression mlr = new LogisticRegression()
      .setMaxIter(10)
      .setRegParam(0.3)
      .setElasticNetParam(0.8)
      .setFamily("multinomial");

    // Fit the model
    LogisticRegressionModel mlrModel = mlr.fit(training);

    // Print the coefficients and intercepts for logistic regression with multinomial family
    System.out.println("Multinomial coefficients: " + mlrModel.coefficientMatrix()
      + "\nMultinomial intercepts: " + mlrModel.interceptVector());

The training summary of the binomial model fitted above exposes the loss per iteration:

    import org.apache.spark.ml.classification.BinaryLogisticRegressionTrainingSummary;
    import org.apache.spark.ml.classification.LogisticRegression;
    import org.apache.spark.ml.classification.LogisticRegressionModel;
    import org.apache.spark.sql.Dataset;
    import org.apache.spark.sql.Row;
    import org.apache.spark.sql.SparkSession;
    import org.apache.spark.sql.functions;

    // Extract the summary from the returned LogisticRegressionModel instance trained in the earlier
    // example
    BinaryLogisticRegressionTrainingSummary trainingSummary = lrModel.binarySummary();

    // Obtain the loss per iteration.
    double[] objectiveHistory = trainingSummary.objectiveHistory();
    for (double lossPerIteration : objectiveHistory) {
      System.out.println(lossPerIteration);
    }

In Scala, the weighted multiclass metrics are read from the training summary of a fitted model:

    // trainingSummary is the training summary of a fitted multiclass model
    val accuracy = trainingSummary.accuracy
    val falsePositiveRate = trainingSummary.weightedFalsePositiveRate
    val truePositiveRate = trainingSummary.weightedTruePositiveRate
    val fMeasure = trainingSummary.weightedFMeasure
    val precision = trainingSummary.weightedPrecision
    val recall = trainingSummary.weightedRecall
    println(s"Accuracy: $accuracy\nFPR: $falsePositiveRate\nTPR: $truePositiveRate\n" +
      s"F-measure: $fMeasure\nPrecision: $precision\nRecall: $recall")

The Java version of the multiclass example starts from the following imports; the load statement is cut off in the source:

    import org.apache.spark.ml.classification.LogisticRegression;
    import org.apache.spark.ml.classification.LogisticRegressionModel;
    import org.apache.spark.ml.classification.LogisticRegressionTrainingSummary;
    import org.apache.spark.sql.Dataset;
    import org.apache.spark.sql.Row;
    import org.apache.spark.sql.SparkSession;

    // Load training data
    // Dataset<Row> training = spark. …
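To make the roles of the two regularization parameters more concrete, here is a minimal plain-Java sketch of the elastic net penalty in its usual form, $\lambda\left(\alpha\|w\|_1 + \frac{1-\alpha}{2}\|w\|_2^2\right)$. It does not call Spark; the class name `ElasticNetPenalty`, the method `penalty`, and the weight values are made up for illustration, and the sketch only computes the penalty term, not the full training objective.

```java
public class ElasticNetPenalty {

    /**
     * Elastic net penalty: lambda * (alpha * ||w||_1 + (1 - alpha) / 2 * ||w||_2^2).
     * alpha = 1 gives a pure L1 (lasso) penalty, alpha = 0 a pure L2 (ridge) penalty.
     */
    static double penalty(double[] w, double alpha, double lambda) {
        double l1 = 0.0;        // sum of absolute values
        double l2Squared = 0.0; // sum of squares
        for (double wi : w) {
            l1 += Math.abs(wi);
            l2Squared += wi * wi;
        }
        return lambda * (alpha * l1 + (1.0 - alpha) / 2.0 * l2Squared);
    }

    public static void main(String[] args) {
        // Hypothetical coefficient vector, chosen only for illustration.
        double[] w = {1.0, -2.0, 0.5};

        // Same values as the example's elasticNetParam (alpha) and regParam (lambda).
        System.out.println("alpha=0.8, lambda=0.3: " + penalty(w, 0.8, 0.3));
        System.out.println("alpha=1.0 (L1 only):   " + penalty(w, 1.0, 0.3));
        System.out.println("alpha=0.0 (L2 only):   " + penalty(w, 0.0, 0.3));
    }
}
```

Moving $\alpha$ toward 1 puts more weight on the L1 term, which tends to push coefficients to exactly zero, while $\lambda$ scales the overall strength of the penalty.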

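The remark that the multinomial family yields two sets of coefficients and two intercepts for a binary problem can be illustrated without Spark. The sketch below, in plain Java, assumes the usual softmax form for the multinomial model and the usual sigmoid form for the binomial one, and checks that the class-1 probability from two coefficient sets equals the sigmoid of the difference between them. All names and numbers are hypothetical.

```java
public class BinaryAsMultinomial {

    static double sigmoid(double z) {
        return 1.0 / (1.0 + Math.exp(-z));
    }

    static double dot(double[] a, double[] b) {
        double sum = 0.0;
        for (int i = 0; i < a.length; i++) {
            sum += a[i] * b[i];
        }
        return sum;
    }

    /** Class-1 probability from a two-class softmax over margins w_k . x + b_k. */
    static double multinomialProb(double[][] w, double[] b, double[] x) {
        double m0 = dot(w[0], x) + b[0];
        double m1 = dot(w[1], x) + b[1];
        double max = Math.max(m0, m1); // subtract the max for numerical stability
        double e0 = Math.exp(m0 - max);
        double e1 = Math.exp(m1 - max);
        return e1 / (e0 + e1);
    }

    /** Class-1 probability from a single coefficient set: the difference w_1 - w_0. */
    static double binomialProb(double[][] w, double[] b, double[] x) {
        double[] diff = new double[x.length];
        for (int i = 0; i < x.length; i++) {
            diff[i] = w[1][i] - w[0][i];
        }
        return sigmoid(dot(diff, x) + (b[1] - b[0]));
    }

    public static void main(String[] args) {
        // Hypothetical coefficients, intercepts, and feature vector.
        double[][] w = {{0.5, -1.0}, {-0.25, 2.0}};
        double[] b = {0.1, -0.3};
        double[] x = {1.5, 0.2};

        System.out.println("multinomial P(y=1): " + multinomialProb(w, b, x));
        System.out.println("binomial    P(y=1): " + binomialProb(w, b, x));
    }
}
```

The two printed probabilities agree up to floating-point rounding, which is why only the difference between the two coefficient sets matters for the predicted probabilities in the binary case.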
|
